The Restraining Order: Divorcing Tailscale from the Data Path

In which we divorce Tailscale from the data path, discover that etcd has trust issues, accidentally power-cycle an entire city, and learn that the backup network is better than the primary.

There is a particular flavor of systems engineering hubris that says: “I will just swap the networking layer underneath a running distributed database. On a Tuesday. Over breakfast.”

This is the story of that Tuesday. And Wednesday. And Thursday.

The Toxic Relationship

For thirteen months, our K3s cluster ran on Tailscale. Eighteen nodes across four regions — Austin, LA, Torrance, Tokyo — all connected through WireGuard tunnels coordinated by Tailscale’s control plane. It worked. Mostly.

The “mostly” was doing a lot of heavy lifting in that sentence.

Tailscale’s DERP relay servers are the networking equivalent of a couples therapist who charges by the millisecond. When two nodes can’t find each other directly (which happens more than you’d think with residential NAT), traffic routes through Tailscale’s relay infrastructure. Your etcd heartbeat from Tokyo to Austin? That’ll be Tokyo → relay in Dallas → Austin. 135 milliseconds plus jitter, please and thank you.

etcd’s election timeout is one second. Do the math on how many DERP relay hiccups you need before a leader election cascade turns your cluster into an expensive space heater.
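To put rough numbers on that, here is a small Python sketch. It assumes etcd’s documented defaults (--heartbeat-interval=100 and --election-timeout=1000, in milliseconds) and the rule of thumb from etcd’s tuning guide that the election timeout should be at least ten times the round-trip time between members. It also models raft’s randomized timeout: each follower waits somewhere between one and two times the configured value, so a relay stall inside that window may or may not trigger an election. The stall durations are illustrative; the RTTs are the figures quoted in this post.

```python
# Assumed etcd defaults, in milliseconds (--heartbeat-interval / --election-timeout).
HEARTBEAT_MS = 100
ELECTION_TIMEOUT_MS = 1000


def election_risk(silence_ms: float, election_ms: int = ELECTION_TIMEOUT_MS) -> str:
    """How likely a follower is to call an election after hearing nothing
    from the leader for `silence_ms` (for example, a stalled DERP relay).

    raft randomizes each follower's timeout in [election_ms, 2 * election_ms),
    so a stall inside that window may or may not trigger an election.
    """
    if silence_ms < election_ms:
        return "safe"
    if silence_ms < 2 * election_ms:
        return "possible"
    return "certain"


def min_election_timeout(rtt_ms: float) -> float:
    """Rule of thumb from etcd's tuning guide: at least 10x the member RTT."""
    return 10 * rtt_ms


if __name__ == "__main__":
    # Heartbeat intervals that fit inside one default election timeout.
    print("heartbeats per timeout:", ELECTION_TIMEOUT_MS // HEARTBEAT_MS)
    for stall in (400, 1200, 2500):
        print(f"{stall} ms stall -> {election_risk(stall)}")
    for label, rtt in (("DERP via Dallas", 135), ("DERP trans-Pacific", 1020), ("Nebula direct", 172)):
        print(f"{label}: suggested election timeout >= {min_election_timeout(rtt):.0f} ms")
```

By that rule of thumb, even the 172 ms Nebula path would call for more than the 1,000 ms default.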

The RPi4 control planes were the canary. Eight gigs of RAM sounds reasonable until Tailscale’s tailscaled process decides it needs 40% of it. On a machine running etcd, the K3s API server, and containerd, that’s not “coexistence” — that’s a hostile takeover.

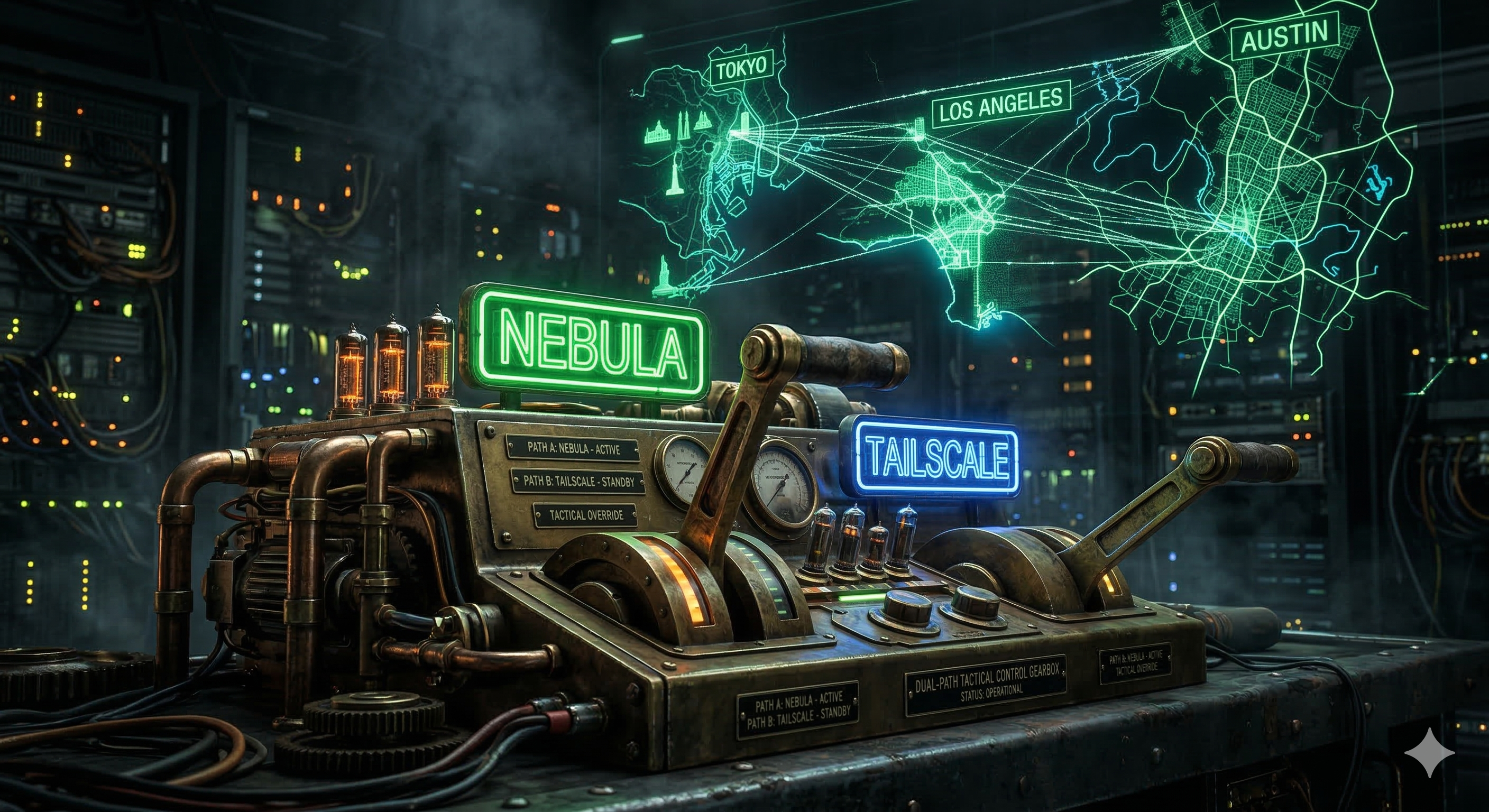

The Shadow Mesh

A week before The Migration, we’d deployed Nebula — Slack’s open-source overlay network — as a “shadow mesh.” The idea was simple: build a second network layer, verify it works, then migrate traffic onto it. ShortCircuit, we called it, because we’re exactly the kind of people who name their overlay networks.

We ran two lighthouses (a DigitalOcean droplet in SFO3, plus a Mac Mini in Austin); certificates were generated on the lighthouse and distributed via Ansible’s slurp module. The deployment went… let’s just say it has its own postmortem.

But after the initial excitement of taking down the entire cluster by setting MTU to 1200 (turns out SSH packets are bigger than 1200 bytes — who knew?), the shadow mesh stabilized. Every node had a nebula0 interface sitting there quietly, routing packets at 172ms trans-Pacific instead of Tailscale’s relay-dependent 1,020ms.
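For readers new to Nebula: every host needs a certificate signed by the mesh CA, issued with nebula-cert sign, which takes the host’s name and its overlay IP in CIDR form. A minimal Python sketch that builds those commands for a batch of hosts might look like this; the host names, the /16 prefix for 10.167.x.x, and the output file names are assumptions for illustration, not our actual inventory.

```python
import shlex

# Assumption: the 10.167.x.x overlay is a /16. Adjust to the real CA range.
NEBULA_PREFIX = 16


def sign_commands(inventory: dict[str, str]) -> list[list[str]]:
    """Build one `nebula-cert sign` invocation per host.

    Meant to run where ca.crt/ca.key live (nebula-cert's default CA paths in
    the current directory); the resulting .crt/.key pairs are what an Ansible
    step would then collect and push out to each node.
    """
    return [
        ["nebula-cert", "sign",
         "-name", name,
         "-ip", f"{ip}/{NEBULA_PREFIX}",
         "-out-crt", f"{name}.crt",
         "-out-key", f"{name}.key"]
        for name, ip in sorted(inventory.items())
    ]


if __name__ == "__main__":
    # Hypothetical hosts and addresses.
    for cmd in sign_commands({"node-a": "10.167.6.7", "node-b": "10.167.6.9"}):
        print(shlex.join(cmd))
```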

Time to cut the wire.

The Plan Was Perfect

The migration plan was straightforward:

- Workers first (13 nodes): Change --flannel-iface=tailscale0 to --flannel-iface=nebula0 and update --node-ip to the Nebula address. Drain, edit, restart, uncordon. Serial: 1.

- Control planes (5 nodes): Same thing, but with extra care, because etcd.

- Update inventory: Point everything at Nebula IPs.

- Decommission Tailscale: Weeks later, after stability is proven.

The workers went fine. Thirteen nodes, one at a time, zero drama. Flannel rebuilt its VXLAN tunnels over nebula0 and pods kept running. The only interesting moment was discovering that flannel.1 doesn’t auto-switch its parent interface: you have to delete it and let K3s recreate it. A minor ip link del flannel.1 never hurt anyone.
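The worker loop (drain, edit, restart, uncordon, plus the flannel.1 cleanup) can be sketched as a dry-run plan in Python. This is an illustration under assumptions, not the Ansible play we ran: the flags live in K3s’s config file at /etc/rancher/k3s/config.yaml as flannel-iface: and node-ip: keys, workers run the k3s-agent systemd unit, and each node is reachable over SSH by name. It stops the agent before deleting flannel.1 so the interface is recreated on nebula0 when the agent comes back.

```python
import shlex
import subprocess

# Assumptions: standard K3s config path, k3s-agent systemd unit on workers,
# and SSH access to each node by name.
K3S_CONFIG = "/etc/rancher/k3s/config.yaml"


def remote(node: str, *argv: str) -> list[str]:
    """Wrap a command for ssh; shlex.join keeps arguments with spaces intact."""
    return ["ssh", node, shlex.join(argv)]


def worker_steps(node: str, nebula_ip: str) -> list[list[str]]:
    """Drain, edit, restart, uncordon for one worker."""
    return [
        ["kubectl", "drain", node, "--ignore-daemonsets", "--delete-emptydir-data"],
        remote(node, "sudo", "sed", "-i",
               "-e", "s/^flannel-iface:.*/flannel-iface: nebula0/",
               "-e", f"s/^node-ip:.*/node-ip: {nebula_ip}/",
               K3S_CONFIG),
        remote(node, "sudo", "systemctl", "stop", "k3s-agent"),
        # flannel.1 keeps its old parent interface; delete it so K3s rebuilds it on nebula0.
        remote(node, "sudo", "ip", "link", "del", "flannel.1"),
        remote(node, "sudo", "systemctl", "start", "k3s-agent"),
        ["kubectl", "uncordon", node],
    ]


def migrate(workers: dict[str, str], dry_run: bool = True) -> list[list[str]]:
    """Serial: 1, one worker at a time; check=True aborts on the first failure."""
    plan = []
    for node, nebula_ip in workers.items():
        for cmd in worker_steps(node, nebula_ip):
            plan.append(cmd)
            if not dry_run:
                subprocess.run(cmd, check=True)
    return plan


if __name__ == "__main__":
    # Hypothetical worker name and Nebula address.
    for cmd in migrate({"worker-a": "10.167.1.10"}):
        print(shlex.join(cmd))
```

With dry_run=True it only returns the commands, which makes it easy to eyeball the plan before letting check=True stop a real run at the first failure.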

etcd Has Trust Issues

Here’s something the K3s documentation doesn’t tell you: when you change --node-ip, K3s changes the etcd peer URL to match. Your etcd member that was advertising https://100.67.67.67:2380 now wants to be https://10.167.6.7:2380. Reasonable.

etcd peer certificates are generated at first boot with only the original IP in their Subject Alternative Names. When another etcd member tries to connect to your new Nebula IP, mutual TLS looks at the certificate, sees only the Tailscale IP in the SANs, and says: "tls: 10.167.6.9 does not match any of DNSNames".

Imagine showing up to your own apartment with a valid key, but the doorman doesn’t recognize your face because you got a haircut. That’s etcd with a new IP.

The breakthrough: K3s’s --tls-san flag adds SANs to both the API server certificate AND the etcd peer certificates. Discovering this let us do a two-pass cert regeneration before cutting the networking wire, so each control plane’s peer certificate already listed its Nebula IP by the time --node-ip changed. It was the only reason the cluster survived the first 24 hours.
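Before changing --node-ip on a control plane, a quick pre-flight check can confirm that the etcd peer certificate already lists the new address. A minimal Python sketch, assuming OpenSSL 1.1.1 or newer (for the x509 -ext option) and that K3s keeps the peer certificate at the path below; treat that path as an assumption and check it on your own servers.

```python
import subprocess

# Assumed location of the K3s etcd peer certificate; verify on your nodes.
PEER_CERT = "/var/lib/rancher/k3s/server/tls/etcd/peer-server-client.crt"


def san_ips(openssl_text: str) -> set[str]:
    """Extract IP SANs from `openssl x509 -noout -ext subjectAltName` output."""
    ips = set()
    for token in openssl_text.replace("\n", ",").split(","):
        token = token.strip()
        if token.startswith("IP Address:"):
            ips.add(token[len("IP Address:"):])
    return ips


def read_sans(cert_path: str = PEER_CERT) -> str:
    """Ask openssl to print only the subjectAltName extension."""
    result = subprocess.run(
        ["openssl", "x509", "-in", cert_path, "-noout", "-ext", "subjectAltName"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def safe_to_switch(new_ip: str, cert_path: str = PEER_CERT) -> bool:
    """True only if the peer certificate already covers the new --node-ip."""
    return new_ip in san_ips(read_sans(cert_path))
```

Calling safe_to_switch("10.167.6.7") before the restart turns a failed mutual-TLS handshake into a refusal to proceed.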

The Power Cycle Incident

With four of five control planes migrated and running beautifully on Nebula, we hit a snag: sec-01 (the Kali node in Austin) couldn’t reach aus-ctrl-01 via the mesh. Both on the same LAN. Both talking to the lighthouse fine. Just couldn’t talk to each other.

“I can power cycle it,” the operator said. “I have a smart plug.”

What the operator had was a smart plug connected to the power strip that fed the entire Austin rack. Eight nodes. Every Austin node went dark simultaneously. The migration survived an unplanned rack-scale failure because Tokyo, LA, and Torrance held the quorum line.
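The quorum arithmetic behind “held the quorum line” is short: a cluster of n voting etcd members needs floor(n/2) + 1 of them, so five control planes can lose two. The Python sketch below uses a hypothetical placement of the five control planes (the exact layout at the time isn’t given here) to show that losing a whole site is survivable only if that site holds at most two members.

```python
def quorum(members: int) -> int:
    """Votes etcd needs to elect a leader and commit writes."""
    return members // 2 + 1


def survives_site_loss(members_by_site: dict[str, int], lost_site: str) -> bool:
    """True if the members outside `lost_site` still form a quorum."""
    total = sum(members_by_site.values())
    remaining = total - members_by_site.get(lost_site, 0)
    return remaining >= quorum(total)


# Hypothetical placement of five control planes, for illustration only.
LAYOUT = {"austin": 2, "tokyo": 1, "la": 1, "torrance": 1}

if __name__ == "__main__":
    for site in LAYOUT:
        print(site, "loss survivable:", survives_site_loss(LAYOUT, site))
```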

The 80-Hour Siege

The migration post was supposed to end there. Instead, we entered the “Final Siege.”

We spent the next 48 hours fighting a Reconciliation Loop of Death. With the cluster reset to a single-node etcd on an RPi5 (toa-ctrl), the “Thundering Herd” of 17 agents simultaneously reconnecting triggered a CPU/IO spiral that crashed the API server every three minutes.

We discovered two “Invisible Barriers”:

- The Aero Ceiling: Our primary relay (MacBook) had an MTU of 1300, while the cluster was at 1350. Handshakes would pass (small packets), but TLS certificates (~1.5KB) were silently dropped.

- The Webhook Deadlock: Deleting pods to reduce load was blocked because the mimir-rollout-operator webhook was down. The API server was trying to ask a dead pod for permission to kill other dead pods.
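The Aero Ceiling is easier to see with a toy model. This Python sketch is a simplification, not a packet capture: it assumes TCP sizes segments from the sender’s own MTU (MSS = MTU minus 40 bytes of IPv4 and TCP headers, ignoring options), that no ICMP “fragmentation needed” replies reach the sender, and that a ~1.5 KB certificate goes out as a single write. The 200-byte handshake write is an illustrative size.

```python
IP_TCP_HEADERS = 40  # IPv4 (20) + TCP (20), no options


def tcp_packet_sizes(payload_bytes: int, sender_mtu: int) -> list[int]:
    """IP packet sizes for one TCP write, segmented at MSS = MTU - 40."""
    mss = sender_mtu - IP_TCP_HEADERS
    sizes = []
    while payload_bytes > 0:
        chunk = min(mss, payload_bytes)
        sizes.append(chunk + IP_TCP_HEADERS)
        payload_bytes -= chunk
    return sizes


def black_holed(packet_sizes: list[int], path_mtu: int) -> list[int]:
    """Packets too big for the narrowest hop; dropped without a trace."""
    return [size for size in packet_sizes if size > path_mtu]


if __name__ == "__main__":
    cert = tcp_packet_sizes(1500, sender_mtu=1350)       # ~1.5 KB certificate
    hello = tcp_packet_sizes(200, sender_mtu=1350)       # small handshake-sized write
    print(cert, black_holed(cert, path_mtu=1300))         # the full-size segment is lost
    print(hello, black_holed(hello, path_mtu=1300))       # nothing is lost
    unified = tcp_packet_sizes(1500, sender_mtu=1300)
    print(unified, black_holed(unified, path_mtu=1300))   # fits once MTUs match
```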

The Silent Master Strategy

To win, we had to go “Nuclear”:

- Silence the Mesh: Stop all 17 agents globally.

- The Virgin Master: Cordon the master, drain all workloads, and surgically delete every ValidatingWebhookConfiguration.

- NAT Breakthrough: Forward UDP 4242 directly to the master, giving the mesh a “front door” that bypassed the lossy relays.

- Serial Re-join: Start agents one-by-one with a 60-second “soak” between each.

The RPi5 finally breathed. Load dropped from 8.0 to 1.0. One by one, the regions returned.
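The serial re-join is simple enough to script. A minimal Python sketch, assuming agents start with systemctl start k3s-agent over SSH and that “healthy” means the master’s one-minute load average is below a threshold. The 4.0 default (one per core on a four-core RPi5) and the retry limit are hypothetical choices, not the values we used.

```python
import subprocess
import time
from typing import Callable, Iterable


def serial_rejoin(
    agents: Iterable[str],
    start: Callable[[str], None],
    healthy: Callable[[], bool],
    soak_s: float = 60,
    max_checks: int = 5,
    sleep: Callable[[float], None] = time.sleep,
) -> list[str]:
    """Start agents one at a time, soaking after each until the master is healthy."""
    joined = []
    for agent in agents:
        start(agent)
        joined.append(agent)
        sleep(soak_s)
        checks = 1
        while not healthy():
            if checks >= max_checks:
                raise RuntimeError(f"master still unhealthy after {agent} joined; stopping")
            sleep(soak_s)
            checks += 1
    return joined


def ssh_start_agent(node: str) -> None:
    subprocess.run(["ssh", node, "sudo systemctl start k3s-agent"], check=True)


def master_load_below(master: str, threshold: float = 4.0) -> Callable[[], bool]:
    """Health check: one-minute load average on the master, read via SSH."""
    def check() -> bool:
        out = subprocess.run(["ssh", master, "cat /proc/loadavg"],
                             capture_output=True, text=True, check=True).stdout
        return float(out.split()[0]) < threshold
    return check
```

For example, serial_rejoin(workers, ssh_start_agent, master_load_below("toa-ctrl")), assuming toa-ctrl resolves over SSH. Injecting start, healthy, and sleep keeps the loop testable without a cluster.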

The Dual-Path Fallback

The most useful thing to come out of the post-migration chaos wasn’t technical — it was operational. ssh-switch is a one-command tool we built to flip the entire SSH and Ansible control path between Nebula and Tailscale:

```shell
ssh-switch nebula     # Normal operations: 10.167.x.x (Green)
ssh-switch tailscale  # Emergency: 100.x.x.x (Blue)
```

It’s a symlink toggle on ~/.ssh/config.d/15-cluster. Ansible doesn’t care which network it’s using; it connects by hostname, and whichever SSH config is active decides which addresses those hostnames map to. When Nebula is down, you switch to the “Tailscale Backdoor,” do the work, and switch back.
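The core of a symlink toggle like ssh-switch fits in a few lines. This Python sketch is an illustration, not the actual tool, and assumes a hypothetical layout: one config per network in ~/.ssh/cluster/ (nebula.conf and tailscale.conf, kept out of config.d so only the active one is read) and an Include config.d/* line in ~/.ssh/config, since OpenSSH does not read that directory on its own. Building a temporary link and renaming it over the old one with os.replace makes the switch atomic, so an Ansible run in flight never sees a missing config.

```python
import os
import sys
from pathlib import Path

NETWORKS = ("nebula", "tailscale")


def ssh_switch(network: str, config_d: Path, profiles: Path) -> Path:
    """Point config_d/15-cluster at profiles/<network>.conf, atomically."""
    if network not in NETWORKS:
        raise ValueError(f"unknown network {network!r}; expected one of {NETWORKS}")
    target = profiles / f"{network}.conf"
    if not target.is_file():
        raise FileNotFoundError(target)
    link = config_d / "15-cluster"
    tmp = config_d / ".15-cluster.tmp"
    if tmp.is_symlink() or tmp.exists():
        tmp.unlink()
    tmp.symlink_to(target.resolve())
    os.replace(tmp, link)  # rename(2) swaps the link in one step
    return link


if __name__ == "__main__" and len(sys.argv) == 2:
    ssh_dir = Path.home() / ".ssh"
    ssh_switch(sys.argv[1], ssh_dir / "config.d", ssh_dir / "cluster")
    print(f"SSH control path now via {sys.argv[1]}")
```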

The Scoreboard (Final)

| Metric | Before | After |
|---|---|---|
| Nodes on Nebula | 0 | 18/18 |
| etcd peer URLs | Tailscale (100.x.x.x) | Nebula (10.167.x.x) |
| Flannel MTU | 1230 | 1250 (Unified) |
| Trans-Pacific latency | ~1,020ms | ~172ms (Direct) |
| Outage Duration | 0h | ~78 hours |
| Webhooks Nuked | 0 | All of them |
| Smart plug incidents | 0 | 1 (Never again) |
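The two Flannel MTU values follow from simple arithmetic, assuming Flannel’s VXLAN backend reserves 50 bytes for outer headers (14 Ethernet + 20 IPv4 + 8 UDP + 8 VXLAN) and that the parent interfaces sat at 1280 (tailscale0’s default) and 1300 (Nebula’s default tun MTU). A tiny Python sketch:

```python
# Flannel VXLAN overhead: outer Ethernet (14) + IPv4 (20) + UDP (8) + VXLAN (8).
VXLAN_OVERHEAD = 50


def flannel_mtu(parent_mtu: int) -> int:
    """MTU Flannel leaves for pod traffic on top of a given parent interface."""
    return parent_mtu - VXLAN_OVERHEAD


if __name__ == "__main__":
    for name, mtu in (("tailscale0", 1280), ("nebula0 (unified)", 1300), ("nebula0 at 1350", 1350)):
        print(f"{name}: parent {mtu} -> flannel {flannel_mtu(mtu)}")
```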

What We Learned

1. Shadow networks are underrated. Keep your old network until the new one has survived at least one unplanned incident. Tailscale saved the cluster three times while we were trying to kill it.

2. Standardize your MTU globally. A 50-byte difference between a relay and a worker is an invisible wall that stops only the largest packets, which turn out to be the most important ones (TLS certificates).

3. Single-node etcd on RPi5 is fragile. It can handle 18 nodes, but not 18 nodes and 250 pods trying to reconcile at once. Use cordon and drain to protect your master during recovery.

4. Webhooks are a circular dependency trap. If your API server is struggling, delete your ValidatingWebhookConfigurations. You can always recreate them later; left in place, they can kill your ability to recover a broken cluster.

5. Label your smart plugs. Seriously.
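Lesson 4 can be made slightly less blunt. A dead webhook blocks requests only when its failurePolicy is Fail (the default in admissionregistration.k8s.io/v1), so the Python sketch below, a wrapper around kubectl, picks out the fail-closed ValidatingWebhookConfigurations and saves them as YAML before deleting them. It assumes kubectl on PATH with cluster-admin rights; any names used with it are hypothetical, and this is a narrower variant of what we did, which was to delete all of them.

```python
import json
import subprocess


def fail_closed(webhook_list: dict) -> list[str]:
    """Names of ValidatingWebhookConfigurations with any webhook set to failurePolicy: Fail."""
    names = []
    for item in webhook_list.get("items", []):
        hooks = item.get("webhooks") or []
        if any(h.get("failurePolicy", "Fail") == "Fail" for h in hooks):
            names.append(item["metadata"]["name"])
    return names


def live_webhooks() -> dict:
    """Fetch the current list from the API server as JSON."""
    out = subprocess.run(
        ["kubectl", "get", "validatingwebhookconfigurations", "-o", "json"],
        capture_output=True, text=True, check=True,
    ).stdout
    return json.loads(out)


def backup_then_delete(names: list[str], backup_path: str, dry_run: bool = True) -> list[list[str]]:
    """Save the named configs as YAML, then delete them; returns the commands."""
    get = ["kubectl", "get", "validatingwebhookconfigurations", *names, "-o", "yaml"]
    delete = ["kubectl", "delete", "validatingwebhookconfigurations", *names]
    if names and not dry_run:
        with open(backup_path, "w") as fh:
            subprocess.run(get, stdout=fh, check=True)
        subprocess.run(delete, check=True)
    return [get, delete]
```

For example: backup_then_delete(fail_closed(live_webhooks()), "webhooks-backup.yaml", dry_run=False). The saved YAML still carries server-side fields such as resourceVersion, so expect to tidy it before re-applying.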

The ShortCircuit mesh runs on Nebula by Slack. The 80-hour recovery was performed by one human (Jay) and two AIs (Claudeus and Geminius) operating as the CGJ High Command. No etcd clusters were permanently harmed, though the RPi5 is still recovering from the stress.