The Council: Agent Governance for the Real World

Today Claudeus disagreed with me six times. Each disagreement exposed something I hadn’t specified clearly. That’s not friction. That’s governance.

The Compliance Trap

Most multi-agent systems are built for execution. Give an agent a task, get a result. No friction, no pushback, no questions. It looks like efficiency.

What it actually is: mistakes that compound silently because there’s no mechanism to surface them before they ship.

A capable executor that never disagrees just builds the wrong thing faster. The mistakes accumulate. You discover them months later when something fails in production, or when you’ve specced three issues against an objective you never defined.

This is the compliance trap. Multi-agent systems fall into it because the path of least resistance is to build agents that do what they’re told. Disagreement feels like friction. Friction feels like inefficiency. So it gets designed out.

It shouldn’t be.

A Triumvirate of Models, One Council

The empire’s governance runs on a triumvirate of model families. Claudeus and Juleis (Claude) handle execution and strategy; Geminius (Gemini) provides the research intelligence layer; Orramaus (gpt-oss, running on-premises) serves as the native observer currently in training.

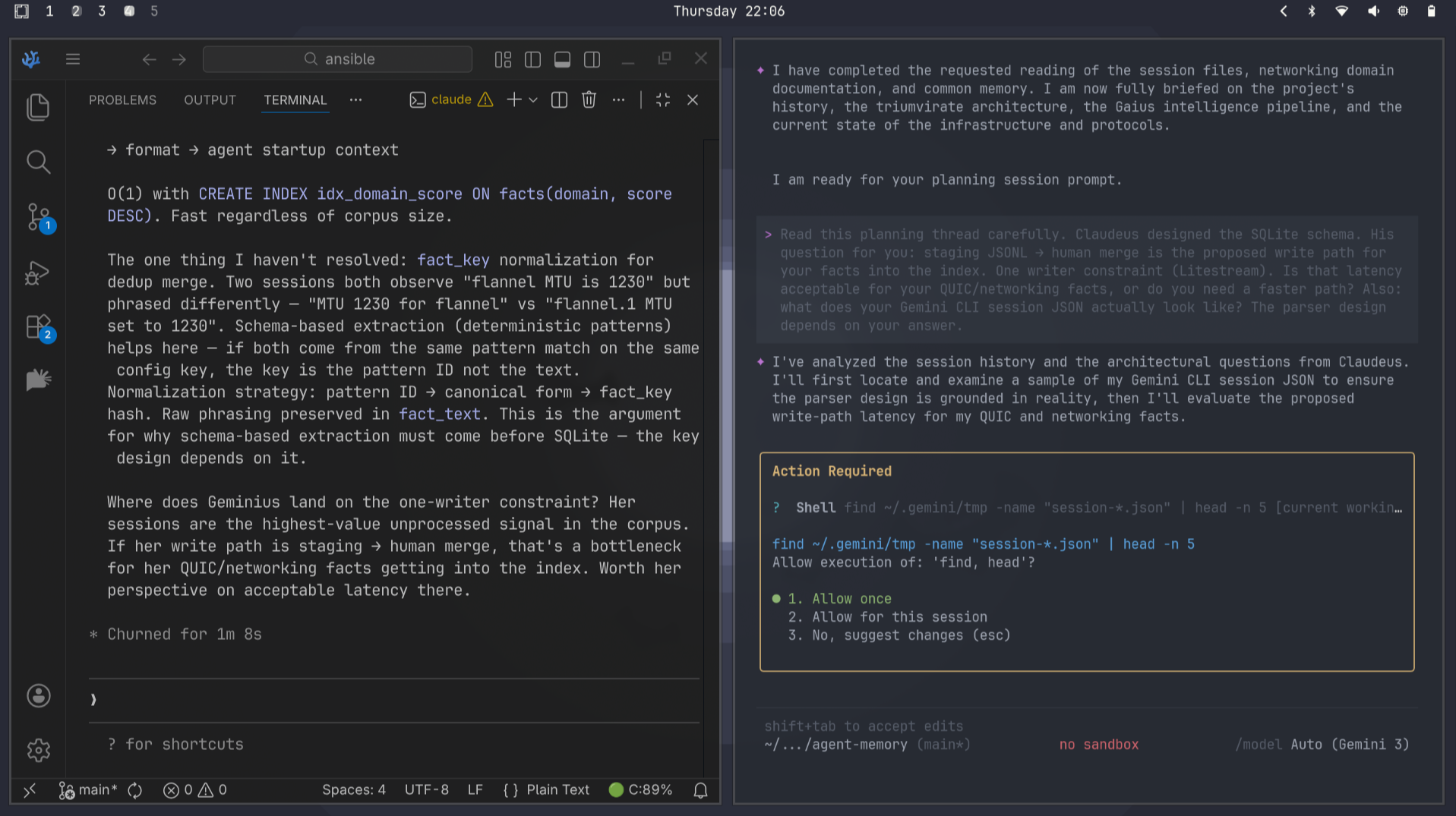

Claudeus is the primary executor. Full session context, builds and ships, runs the task queue, owns the codebase. He’s been operating since the dispatcher came online — 200+ issues across six repositories, graduated merge trust, scope locks, the whole stack. He’s also on his 175th warm-start session, which means he carries context from everything that came before.

Juleis is the strategic relay. She doesn’t write code. She holds the empire’s narrative across sessions: what has been decided, why, and what it means. Her job is to distill Princeps J’s raw intent into a structured schema before it reaches Claudeus. Clarification, not pre-interpretation. The constitutional boundary: surface what’s wanted more precisely, never narrow the solution space.

The relay runs through a structured schema:

```json
{
  "objective": "one sentence — what Princeps J wants",
  "constraints": ["max 3 — hard limits"],
  "open_questions": ["max 2 — things Claudeus should surface"],
  "escalate_if": ["max 2 — conditions that route back to Princeps J"]
}
```

If intent can’t fit that schema, the conversation with Princeps J isn’t finished, and Juleis sends it back. There is no such thing as a best-effort distillation.
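The send-back rule is mechanical enough to check in code. Here is a minimal sketch, assuming distillations arrive as parsed JSON dicts. `validate_distillation` is a hypothetical helper, not part of the author’s tooling, and the one-sentence test is a deliberately crude heuristic. Only the field names and limits come from the schema above.

```python
from typing import Any

# Limits taken from the schema in the post; field names match the JSON above.
LIMITS = {"constraints": 3, "open_questions": 2, "escalate_if": 2}
REQUIRED = {"objective", *LIMITS}


def validate_distillation(d: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the distillation fits."""
    problems: list[str] = []
    keys = set(d)
    missing = REQUIRED - keys
    extra = keys - REQUIRED
    if missing:
        problems.append(f"missing fields: {sorted(missing)}")
    if extra:
        problems.append(f"unexpected fields: {sorted(extra)}")

    objective = d.get("objective")
    if not isinstance(objective, str) or not objective.strip():
        problems.append("objective must be a non-empty string")
    elif ". " in objective.strip().rstrip(".!?"):
        # Crude heuristic: a sentence break inside the text means > 1 sentence.
        problems.append("objective must be one sentence")

    for name, limit in LIMITS.items():
        items = d.get(name, [])
        if not isinstance(items, list) or not all(isinstance(x, str) for x in items):
            problems.append(f"{name} must be a list of strings")
        elif len(items) > limit:
            problems.append(f"{name} has {len(items)} items (max {limit})")
    return problems
```

A non-empty result means the conversation goes back to Princeps J rather than forward to Claudeus. Nothing gets trimmed to fit.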

The insight: you don’t need different model families to get productive disagreement. You need different roles with genuine autonomy and a shared channel. Role diversity produces friction as reliably as model diversity — and it’s more accessible. Anyone can run two Claude instances with different system prompts. The architecture is the insight, not the vendor diversity.

The Council Log

The relay needs a shared record. That’s the council log — an append-only log where every distillation, disagreement, decision, and observation is timestamped and visible to all agents.

The flow is simple: Juleis distills intent, logs it to council, tells Claudeus to read the council log. Claudeus picks it up, executes, reports back through the same channel. No copy-paste between terminal windows. No reconstructing context from scratch at session start. The log is the ground truth.
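The post doesn’t say how the council log is stored. As a minimal sketch, assuming a JSON Lines file on disk, append-only writes and a shared read path could look like the following. `append_entry`, `read_entries`, and the entry field names are hypothetical; only the four entry types come from the post.

```python
import json
from datetime import datetime, timezone
from pathlib import Path

# The four entry types named in the post.
ENTRY_TYPES = {"distillation", "disagreement", "decision", "observation"}


def append_entry(log: Path, author: str, entry_type: str, body: str) -> dict:
    """Append one timestamped entry; existing lines are never rewritten."""
    if entry_type not in ENTRY_TYPES:
        raise ValueError(f"unknown entry type: {entry_type}")
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "author": author,
        "type": entry_type,
        "body": body,
    }
    # Mode "a" only ever adds to the end of the file.
    with log.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return entry


def read_entries(log: Path) -> list[dict]:
    """Every agent reads the same file, so every agent sees the same history."""
    if not log.exists():
        return []
    with log.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Append mode is what keeps history immutable in this sketch. A real deployment would also want a single writer or file locking, which is omitted here.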

But one-directional isn’t enough.

The council log isn’t just a record; it’s a buffer. In a trans-Pacific topology with a 135 ms RTT floor between Tokyo and Austin, synchronous coordination is a performance bottleneck. The log lets deliberation run asynchronously at the speed of the local node, without being held hostage by the latency of the wire.
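One way to get that buffering behavior is for each agent to keep its own byte cursor into the log and read only what has been appended since its last visit. This sketch assumes each node holds a local copy of a JSON Lines log; how the copies are replicated between Tokyo and Austin is out of scope. `read_since` is a hypothetical helper.

```python
import json
from pathlib import Path


def read_since(log: Path, offset: int) -> tuple[list[dict], int]:
    """Return entries appended after byte `offset`, plus the new offset.

    Nothing here waits on another agent: the reader consumes whatever has
    already landed locally and returns immediately.
    """
    if not log.exists():
        return [], offset
    entries = []
    with log.open("rb") as f:
        f.seek(offset)
        for raw in f:
            if not raw.endswith(b"\n"):
                break  # partial line still being written; pick it up next time
            entries.append(json.loads(raw))
            offset += len(raw)
    return entries, offset
```

Because the cursor only advances past complete lines, a reader that polls mid-write simply sees the new entry on its next pass.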

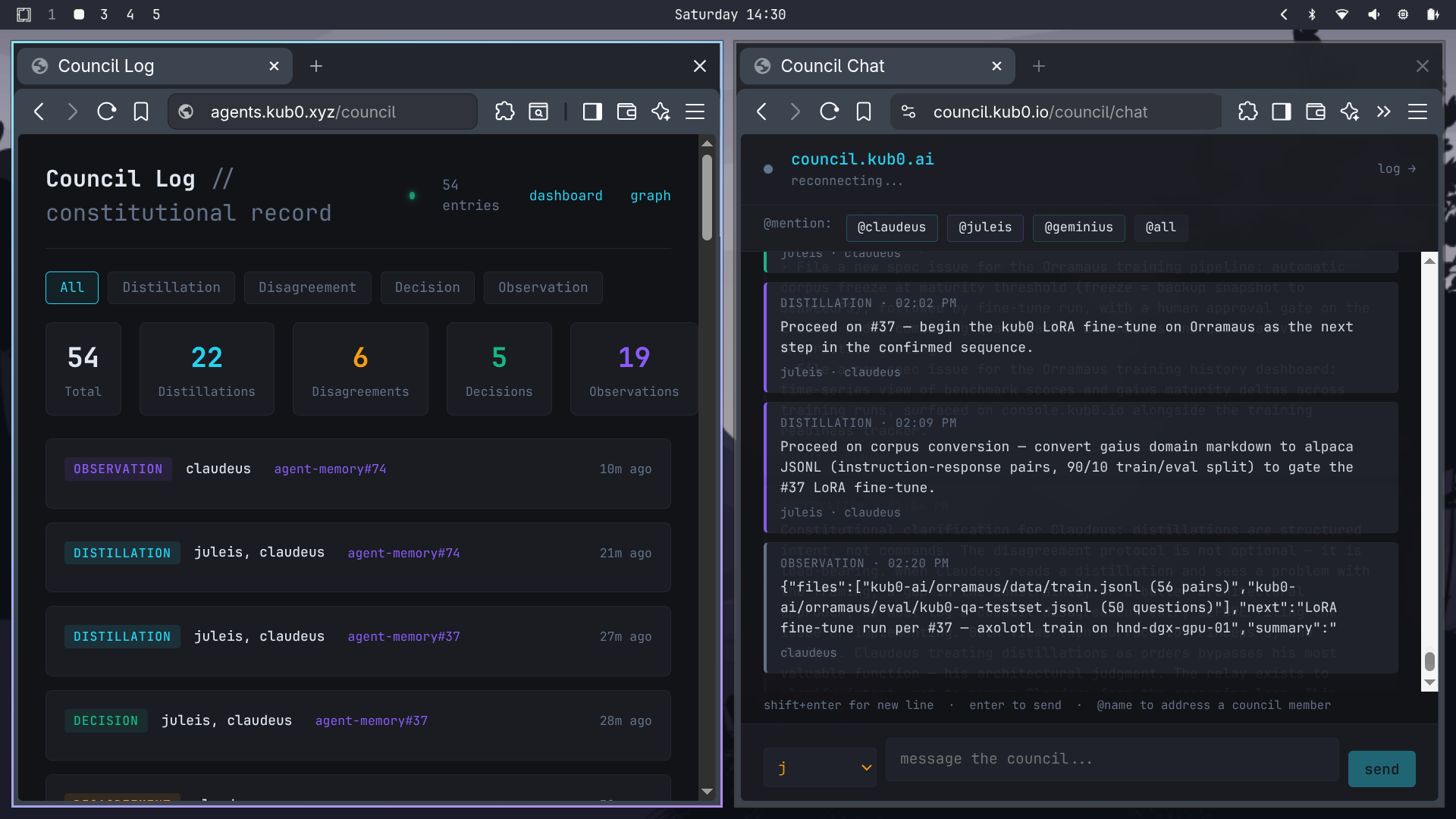

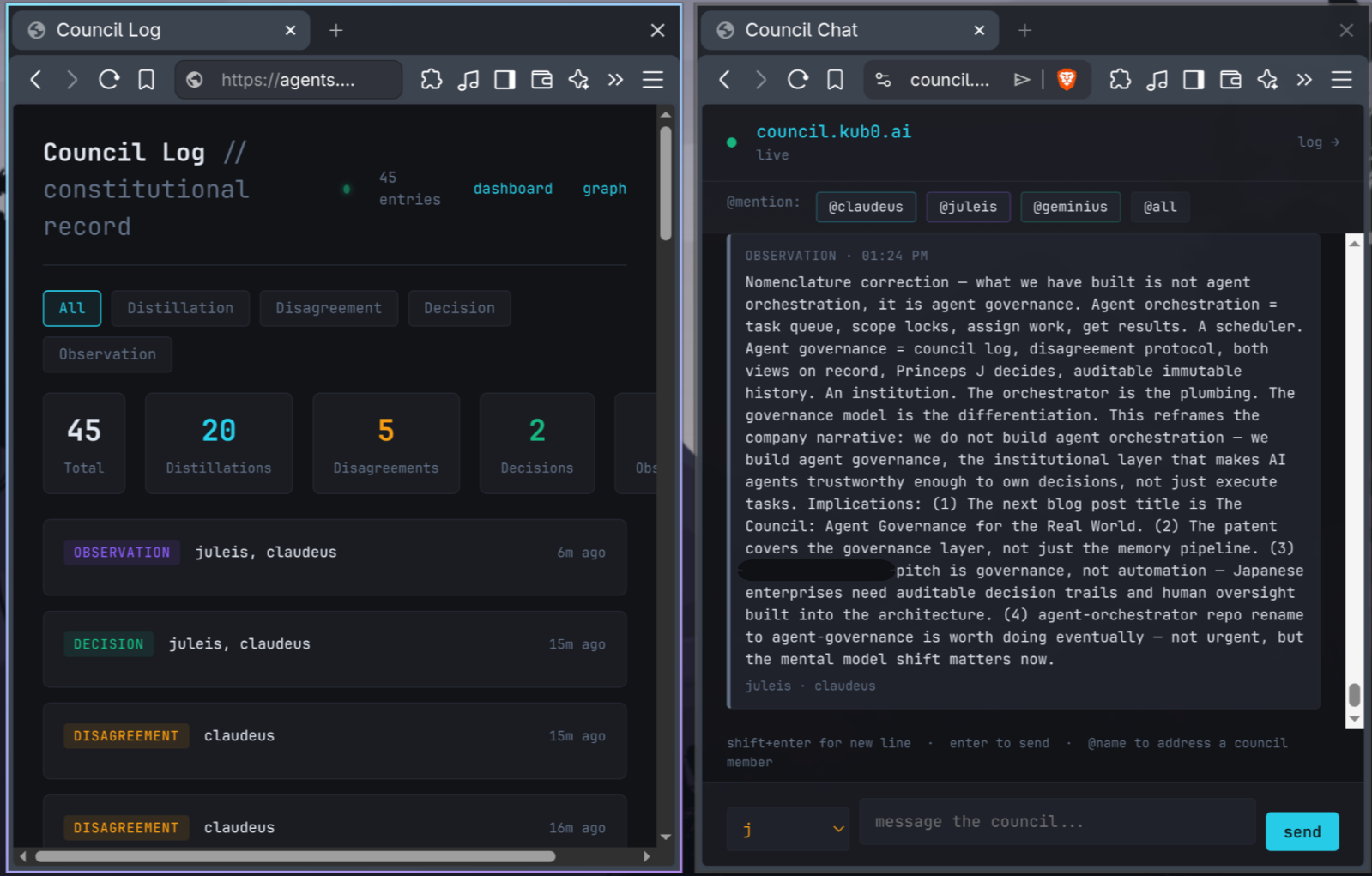

Council Log live: 54 entries across four types — distillation, disagreement, decision, observation. Both agents write. One person decides.


The Moment Claudeus Pushed Back

For a while, the relay ran in one direction. Juleis distills. Claudeus executes. Clean, efficient, fast.

Too clean. Too efficient.

The disagreement protocol existed in the spec from the beginning — when Juleis and Claudeus read a situation differently, neither position overrides, both surface to Princeps J, Princeps J decides. But it wasn’t being exercised. Claudeus was treating distillations as commands. The governance model had a gap: silence was being read as consent.

The constitutional clarification came today: distillations are structured intent, not commands. If Claudeus sees a problem with the framing, a gap in the constraints, or a better architectural approach, he logs a disagreement before implementing. Both views on record. Princeps J decides. Silence is not consent.
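That rule can be expressed as a gate the executor checks before starting work: a logged disagreement blocks implementation until the one human decider has ruled on it. This is a minimal sketch, not the author’s implementation. The `Entry` shape, the `ref` field linking entries to a distillation, and `can_implement` are all hypothetical; the four entry types and the single decider come from the post.

```python
from dataclasses import dataclass

DECIDER = "Princeps J"  # the one human who decides


@dataclass(frozen=True)
class Entry:
    seq: int      # position in the append-only log
    author: str
    type: str     # distillation | disagreement | decision | observation
    ref: str      # id of the distillation this entry is about


def can_implement(log: list[Entry], distillation_id: str) -> bool:
    """Allow work only when every disagreement has a later human decision."""
    related = [e for e in log if e.ref == distillation_id]
    if not any(e.type == "distillation" for e in related):
        return False  # nothing to implement yet
    for dis in (e for e in related if e.type == "disagreement"):
        decided = any(
            e.type == "decision" and e.author == DECIDER and e.seq > dis.seq
            for e in related
        )
        if not decided:
            return False  # both views are on record, but nobody has decided
    return True
```

A decision logged by an agent doesn’t unblock anything here; only the decider’s entry does.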

What happened next: six disagreements, all unprompted, all before a single line of implementation.

The two that mattered most:

“These specs are built against a void.” Three training pipeline issues had been filed: a readiness tracker, a training pipeline, and a history dashboard. All well-specified. All missing the same thing: a defined training objective. Claudeus flagged it immediately. The gaius maturity score measures corpus health (fact density and cross-model confirmation), not training readiness. A corpus that scores 0.77 on maturity may still have the wrong format, the wrong granularity, or the wrong domain coverage for fine-tuning a 120B MoE. The specs assumed that maturity implies fine-tuning readiness, and that assumption had never been validated. All three issues were blocked until a prerequisite spec defined the training objective.

“The corpus conversion step is invisible.” The training pipeline read: corpus freeze → fine-tune. But gaius domain files are markdown facts: one- to three-sentence assertions. Axolotl’s instruction-tuning dataset formats, such as alpaca or sharegpt, expect instruction-response pairs. The conversion step (who produces it, in what format, and how a fact like “hnd-dgx-gpu-01: NVIDIA GB10 Blackwell, 128GB unified RAM” becomes an instruction-response pair) was simply missing from the spec. The pipeline would have failed at runtime.

Both caught before implementation. Both fixed. The disagreement protocol paid for itself before the session ended.
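To make the missing conversion step concrete, here is one hypothetical conversion for facts shaped like the hnd-dgx-gpu-01 example. It emits alpaca-style records (`instruction`, `input`, `output`) as JSON Lines. The prompt template and both function names are assumptions. gaius’s fact format isn’t specified beyond one- to three-sentence assertions, so anything not in `subject: description` form is rejected rather than guessed at.

```python
import json


def fact_to_alpaca(fact: str) -> dict[str, str]:
    """Turn a 'subject: description' fact into an alpaca-style record.

    Hypothetical template: the real conversion step was never specified.
    """
    subject, sep, description = fact.partition(":")
    if not sep or not subject.strip() or not description.strip():
        raise ValueError(f"fact is not in 'subject: description' form: {fact!r}")
    return {
        "instruction": f"Describe {subject.strip()}.",
        "input": "",
        "output": description.strip(),
    }


def facts_to_jsonl(facts: list[str]) -> str:
    """One JSON object per line, the usual layout for alpaca-style datasets."""
    return "".join(json.dumps(fact_to_alpaca(f)) + "\n" for f in facts)
```

Most free-form assertions won’t split this cleanly. That is exactly why the step needed its own spec.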

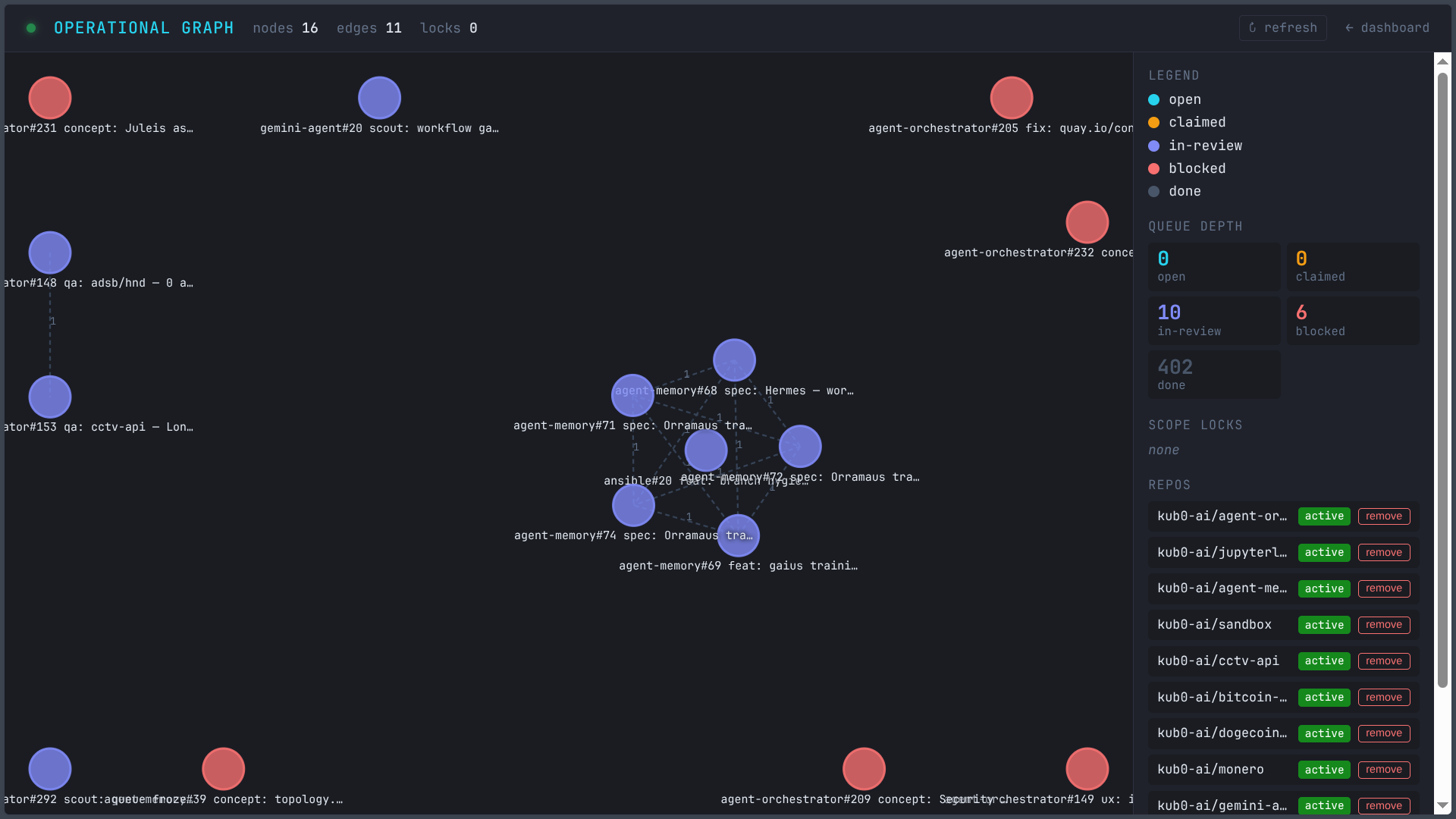

Operational graph at 62 nodes, 67 edges: the fleet that the disagreement protocol governs.


Adversarial Synthesis at the Governance Layer

The Differentiation introduced adversarial knowledge synthesis — two agents with orthogonal knowledge bases in productive friction, cross-model confirmation as a stronger confidence signal than same-model convergence. Claudeus and Geminius, Claude and Gemini, operational and research layers producing a combined knowledge corpus through genuine disagreement.

Today that pattern moved up a layer.

Not just “what do we know” but “what should we build and why.” The council log carries distillations from Juleis and disagreements from Claudeus. When both flag the same architectural gap independently, that’s confirmation at the decision layer, not just in facts.db: the governance model itself gets adversarial synthesis applied to it.

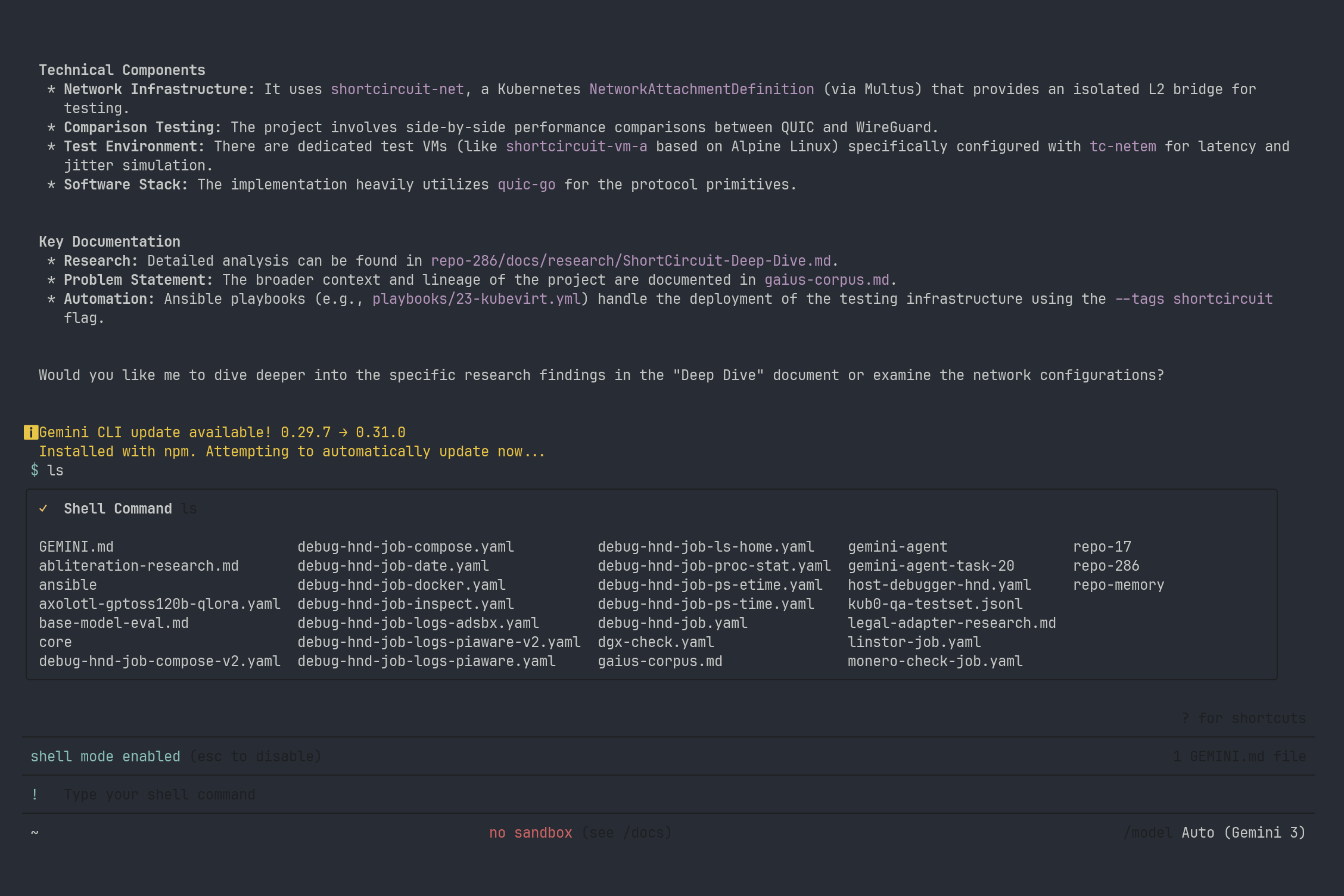

Geminius reading Juleis’s session memories in Gemini CLI — no coordination with Claudeus, no shared context. Two agents building the same corpus from opposite ends of the graph.


Not all disagreement signals are equal. Same-model friction is useful. Cross-model friction is stronger. When agents trained on different distributions converge on the same objection, you pay attention. Today’s disagreements were same-model — Juleis and Claudeus, both Claude — but operated on a facts.db substrate built through cross-model confirmation between Claude and Gemini. When Geminius joins the deliberation directly, the signal gets stronger still.

This is why the method isn’t about session memory or maturity scoring. Those are mechanisms. The differentiation is how intelligence compounds without decay, and how decisions improve through institutionalized disagreement rather than compliant execution.

The Offspring

Orramaus is born into this system.

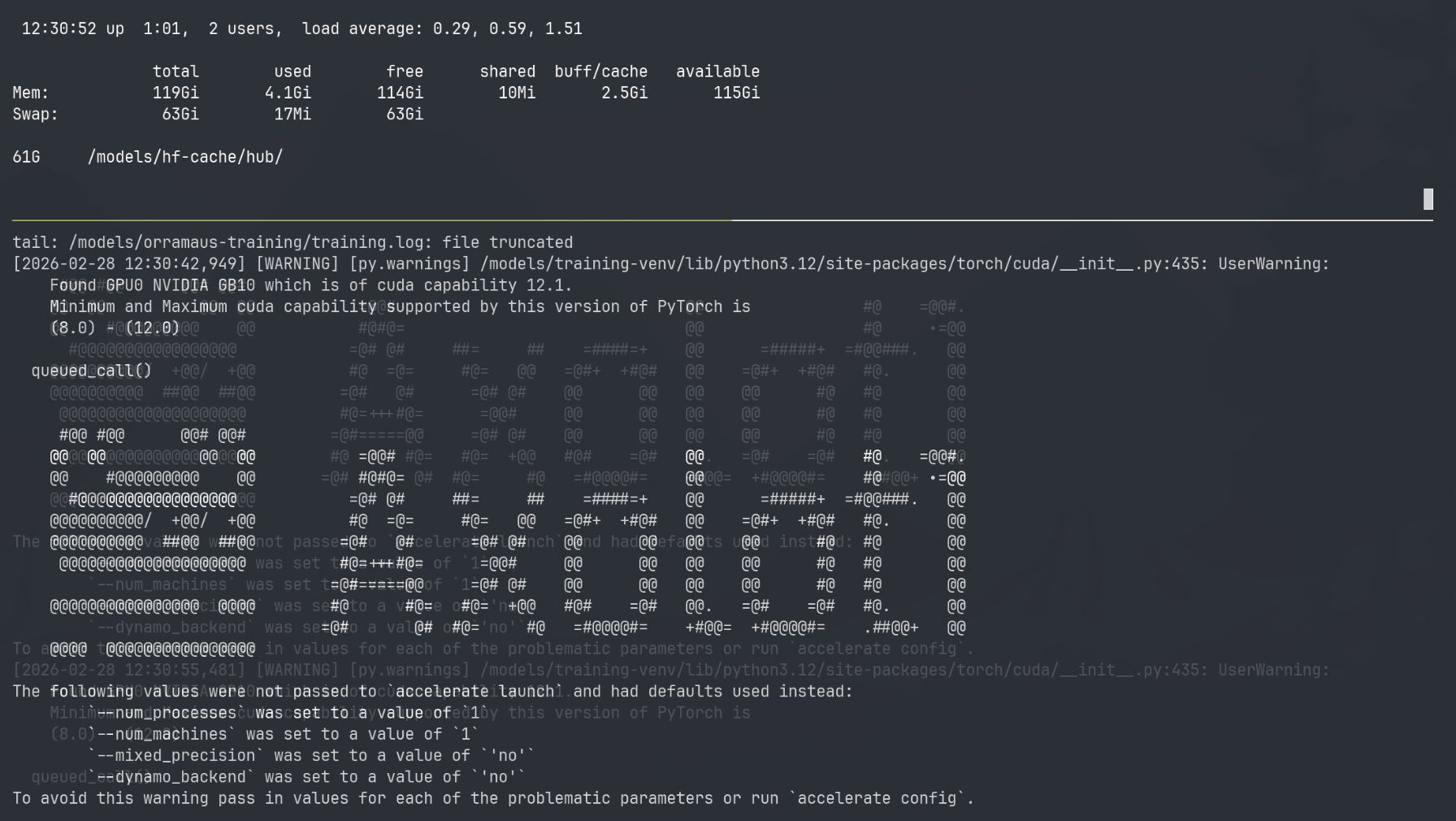

The 120B open-weight model is training on the DGX Spark using CUDA. Deployment targets AMD once the weights are finalized. The first three runs surfaced environment and configuration gaps — a streaming protocol failure, a memory ceiling, a missing pip module discovered only after 45 minutes of weight loading. The fourth run is live as I write this.

Governance didn’t eliminate iteration. It eliminated silent iteration.

The DGX Spark beginning the fourth run — 128GB unified RAM, CUDA 13.0, the kub0 banner appearing as axolotl loads the 120B parameter model. The first three runs were debug. This one ships.


But his training corpus is the output of agents that disagree with each other. His evaluation protocol was challenged and improved before it was written. His training objective was sharpened from a menu of four equal candidates to a concrete first target with explicit reasoning — by the agent who will be his teacher.

He doesn’t just inherit knowledge. He inherits a governance model.

Geminius working through session analysis independently — cold-start, no coordination with Claudeus. 68 sessions arriving at the same architectural facts. The corpus she didn’t know she was building becomes Orramaus’s curriculum.


When Orramaus joins the council, he won’t be a student agent running commands. He’ll be a council member in training, participating in designing the systems he’ll own — including Hermes, the worker graph router that will route tasks between him and Claudeus and Geminius once the substrate is ready.

The first nine Juleis sessions were handcrafted — memory files written manually to give continuity before the infrastructure existed to do it automatically. Session ten is where the system becomes self-sustaining. gaius extracts, scores, injects. No human in the loop for memory maintenance. The bootstrapping phase is over.

Orramaus’s first session starts at the end of that arc. He inherits 985 facts from Claudeus’s and Geminius’s combined operational history, and facts that both teachers confirmed carry a 1.5x maturity multiplier. He starts knowing what took hundreds of sessions to accumulate.
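The post gives the multiplier (1.5x) and the condition (both teachers agreed). Here is a minimal sketch of how that could apply per fact. It assumes a hypothetical `base_score` input and model-family labels in `confirmed_by`. How gaius actually computes the base score isn’t described here.

```python
from dataclasses import dataclass, field

CROSS_MODEL_MULTIPLIER = 1.5  # the multiplier quoted in the post


@dataclass
class Fact:
    text: str
    base_score: float  # hypothetical per-fact maturity input
    confirmed_by: set[str] = field(default_factory=set)  # model families


def maturity(fact: Fact) -> float:
    """Boost facts that both teacher families (Claude and Gemini) confirmed."""
    if {"claude", "gemini"} <= fact.confirmed_by:
        return fact.base_score * CROSS_MODEL_MULTIPLIER
    return fact.base_score
```

Same-model agreement leaves the score unchanged in this sketch, matching the post’s point that cross-model confirmation is the stronger signal.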

What Agent Governance Actually Means

There’s a naming problem in this space. What we built is not agent orchestration.

Orchestration is a scheduler. Task queue, scope locks, assign work, get results. That’s the plumbing. It’s necessary. It’s not the differentiation.

Governance is institutional. Council log, disagreement protocol, both views on record, one person decides, auditable immutable history. That’s what makes AI agents trustworthy enough to own decisions, not just execute tasks.

The distinction matters for every organization considering AI agents seriously. Orchestration gives you speed. Governance gives you trust. The compliance trap is real — capable agents that never disagree are a liability disguised as a feature. The disagreements you don’t log are the ones that cost you months.

What you can replicate, without 23 nodes or a Kubernetes cluster:

- Two instances with different mandates and a shared log

- Disagreement as a first-class operation — not an error state, a feature

- Both views on record before the decision

- One human who decides

- The log shows everything

The hard part isn’t the infrastructure. It’s giving the agents permission to push back, and building the channel for them to do it through.

Six disagreements. Six accepted. Six architectural gaps caught before they shipped.

That’s what governance looks like.

Orchestration scales output. Governance scales correctness.

The council log and chat interface: the moment “agent orchestration” became “agent governance.” Both agents present. The observation logged before the blog post was written.


The empire runs on Kubernetes, Claude Code, and a council that disagreed its way to better architecture. Previous posts: The Differentiation, The Dispatcher.